Learning and Executing Generalized Robot Plans

This blog covers some underappreciated but powerful contributions from Fikes, Hart and Nilsson’s 1972 paper Learning and Executing Generalized Robot Plans [1]. But really, this is blog is an advert to our AAAI’26 paper [2] which showed that some of the ideas from the 1972 paper, namely lifting and regression, are so good that they can be used to learn policies still beat the current state of the art for planning.

| state-action | variable-object | |

|---|---|---|

| forward-execution | succession | grounding |

| backward-learning | regression | lifting |

There will be some undefined mathematical symbols and terms, we hope their meanings can be induced from the context.

For an agent to act intelligently, three essential cognitive functions are acting, planning, and learning.-- (Ghallab et al., 2025)

Such cognitive functions have been implemented in various forms. In today’s day and age, monolithic LLM/VLM/VLA architectures taking center stage due to their various, impressive demos resulting from scaling law hypotheses and on relying on very few assumptions to operate.

In this blog, however, we will look at a paper from the dinosaur ages whose ideas for acting, planning, and learning have withstood the test of time. The work in question is Fikes, Hart and Nilsson’s (FHN) 1972 paper Learning and Executing Generalized Robot Plans, or its accompanying project Shakey the Robot. Richard Fikes and Nils Nilsson are probably most well-known for their work on STRIPS and Peter Hart for the Hough transform for line detection in images.

STRIPS can be viewed as a fundamental planning concept for modelling planning problems. The 1972 work incorporates STRIPS but further introduces less commonly appreciated, yet arguably just as impactful and powerful, ideas for learning and acting. The aim of this blog is to revive and put a spotlight on these contributions, namely the introduction of lifting and regression in order to facilitate learning and generalization, and acting and adapting. To show that the ideas introduced in the 1972 are as great as I claim, we refer to results within our AAAI’26 paper [2] which show how these (very simple) techniques significantly outperform some highly engineered state-of-the-art PDDL planners across various metrics.

Planning

We won’t go into too much detail about planning and STRIPS as many textbook resources [4], [5] and blogs cover their definitions and use cases.

In our context, planning is a goal-conditioned, sequential decision making problem. Formal definitions for planning often exhibit an initial state, set of goal states, and a set of actions. A solution for a planning problem is a sequence of plans or state-action policy that brings us from the initial state to some goal state.

STRIPS: a formal language for modelling planning problems

So what is the major contribution of the STRIPS work in the context of planning?

To put simply, STRIPS introduced a general, formal language for modelling planning problems which has then evolved into the widely used PDDL language. STRIPS refined the idea of the General Problem Solver [6] which as the name suggests was to introduce a general problem solving machine. Specifically, it introduced the notion of declarative programming whereby one models a problem at hand in a language supported by the solver to be solved by such solver. In this way, one automates the problem solving process by requiring that one communicates the problem in an intelligible manner (i.e. via STRIPS and PDDL).

Parameterised actions defined via preconditions and effects

STRIPS introduced a principled way to compactly model actions via parameters and by describing them as a pair of preconditions and effects. We can define a STRIPS action by example with the following robot pick up action.

pre: robotAt(?loc), at(?obj, ?loc), gripperFree()

add: holding(?obj)

del: at(?obj, ?loc), gripperFree()

Here, we see that actions are parameterised via variables. In this case, we call the actions lifted. However, they can also be instantiated or ground with objects to create many possible action instances. For example, the robot can pick(cake, kitchen) or pick(dog, bed). Furthermore, we can define preconditions, telling us when an action is applicable, and effects, telling us what happens to the world when we act upon the action.

Learning

We will refer to learning as the process of acquiring knowledge from experience. In our context of planning and acting, the knowledge we acquire will help us plan and make future decisions more efficiently.

As an example, one can view cooking as a planning task where the goal is to cook a specified meal. The first time one learns to cook was probably a difficult endeavour (at least for me) as there were many actions and effects to keep track of, requiring much System 2 processing. For example, one needs to mindful to defrost frozen food and preheat the pan to ensure even distribution of heat and delicious cooking. However, the reasons for applying such actions become commonplace and obvious as we get used to cooking, such that we usually do these things on autopilot without any deliberative planning, eventually making cooking a System 1 process.

Triangle Tables: reusable macro actions for planning

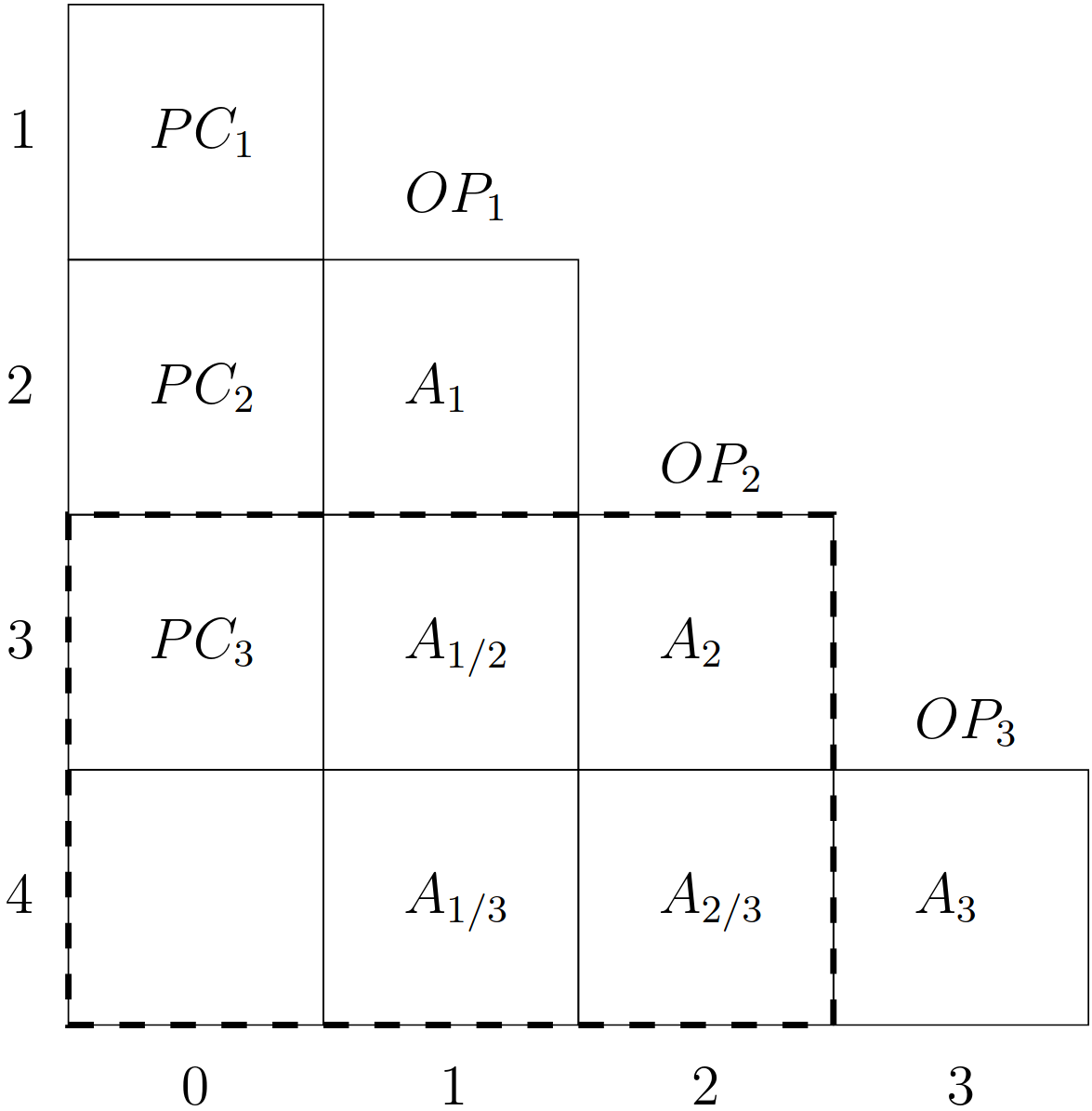

FHN manifested the idea of learning to plan from examples in their paper by representing learned knowledge as triangle tables. Given a sequence of ground actions \(OP_1, \ldots, OP_n\), a triangle table is constructed to determine the necessary conditions \(PC_i\) to apply a subtail of a sequence \(OP_i, \ldots, OP_n\) within a state. Such subtails are called macro actions as they are essentially a larger ‘macro’ action constructed by sequencing smaller actions together. Furthermore, they are lifted from the ground actions such that they can be instantiated and reused on new states.

We won’t go into all the details of triangle tables, Section 3.2.1 of Christian Fritz’s thesis [7] does a great job at explaining them. Instead, we will reframe triangle tables in a slightly different manner in the remainder of this learning section. This is because the intuition behind the construction and execution of a triangle table is not obvious upon first glance (at least for me).

Goal Regression

Christian Fritz’s thesis discovered a connection between triangle tables and the beautiful idea of goal regression that was formally defined after Fikes et al.’s work by Waldinger [8]. Goal regression, a.k.a. pre-image backchaining, is a technique for determining the minimal and sufficient features or conditions to apply an action to achieve a goal. As the names suggest, goal regression is simply the pre-image or regression of an action applied to a goal.

Policies as parameterised \(Condition(\vec{x}) \to Action(\vec{x})\) rules

Having defined goal regression, we now go into a bit more detail into how we will represent state-action policies and how to learn them via goal regression. We define a policy as a mapping from states and goals to actions, sometimes denoted as a function \(\pi: S \times G \to 2^A\) or as a distribution \(a \sim \pi(a \mid s, g)\). At this point, we will start shilling content from our paper [2].

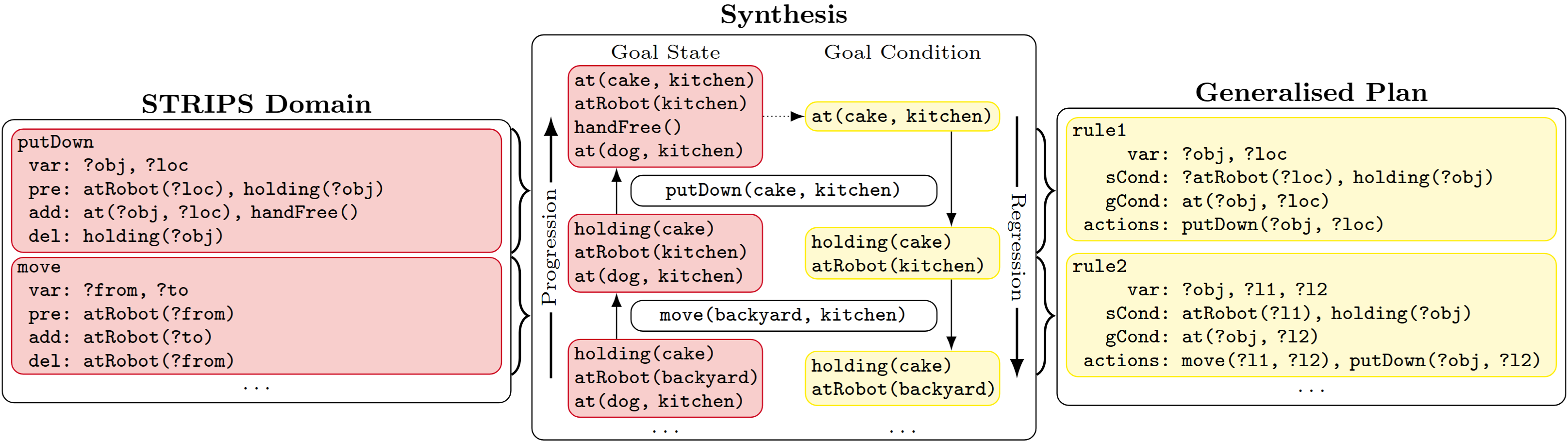

We need a way to represent a policy that can be reused across problems. In order to so, we are going to make use of first-order rules of the form \(Condition(\vec{x}) \to Action(\vec{x})\) where \(\vec{x}\) informally denotes a collection of existential variables. We will represent our policies as a set of rules denoted \(r = \langle \mathit{val}, \mathit{sCond}, \mathit{gCond}, actions \rangle\), where \(\mathit{val}\) represents the ‘value’ of a rule, \(\mathit{sCond}\) and \(\mathit{gCond}\) the state and goal conditions, and \(actions\) the sequence of actions (or macro action from earlier) to apply if the state and goal conditions are satisfied. Let’s look at an example policy for a simple pick and place domain.

\[\begin{aligned} 1: \exists x, l. \; {holding}(x) \wedge {robotAt}(x) \wedge {at}^{G}(x, l) &\to {place}(x, l) \\ 2: \exists x, l, l_1. \; {holding}(x) \wedge {robotAt}(l_1) \wedge {at}^{G}(x, l) &\to {move}(l_1, l), {place}(x, l) \\ 3: \exists x, l, l_1. \; {at}(x, l_1) \wedge {gripperFree}() \wedge {robotAt}(l_1) \wedge {at}^{G}(x, l) &\to {pick}(x, l_1), {move}(l_1, l), {place}(x, l) \\ 4: \exists x, l, l_1, l_2. \; {at}(x, l_1) \wedge {gripperFree}() \wedge {robotAt}(l_2) \wedge {at}^{G}(x, l) &\to {move}(l_2, l_1), {pick}(x, l_1), {move}(l_1, l), {place}(x, l) \end{aligned}\]The value numbers here represent the order with which to check the applicability of the rules to apply. The goal condition consists of the conjuncts in the LHS of the rules superscripted by \(G\), which is satisfied if it holds in the unachieved goals of the problem. The state condition consists of the remaining conjuncts of the LHS. The actions are the macro actions that get applied if the state and goal conditions hold for some assignment of variables.

With enough squinting, it is possible to see that such policies are similar to the aforementioned triangle table with minor differences, where the rule with value \(i\) corresponds to the macro action starting with \(OP_i\).

Executing Policies

We have the form of a policy now. How do we execute them? The answer is that we can leverage the connection between planning and databases [9], namely by viewing planning states and databases, and our policies as queries.

To do so let us define a grounding function which finds a rule-satisfying assignment of state objects to rule variables.

Then it should be quite straightforward how we can execute a policy: for any given state and goal, we try to ground the rule in ascending order of value to derive an (macro) action to apply on the state. Try to repeat this procedure until we reach the goal.

So where does the connection to databases and queries come into again? Well, the grounding can be implemented as a database query. In the code for our paper, we implemented this via Python’s built-in sqlite3 package (thanks to a suggestion by Augusto Correa from the planning and databases paper).

Learning Policies

Now we get to how we can actually create or learn such policies. For this blog we will follow the setup by FHN and synthesise policies (or triangle tables) from given plans.

For this learning subsection, we will now define a lifting function (conversely to the grounding function for executing policies).

We can then use the lifting operator to extract a policy from a sequence of ground actions. For example, given the goal condition \(\{at(dog, park)\}\) and sequence of actions \(move(kitchen, bedroom)\), \(pick(dog, bedroom)\), \(move(bedroom, park)\), \(place(dog, park)\), we can chain goal regression on the sequence of actions from the goal and lift the corresponding outputs to achieve the example policy above.

The following figure illustrates this in action (with a slightly different example)

Acting

This final section will be brief because I don’t have anything too substantial to write yet here. To be brief, the ideas within the previous learning section (regression, lifting, triangle tables and policies) can also be used for execution monitoring in the context of acting, corresponding to the PLANEX algorithm in the FHN 1972 paper. Execution monitoring and replanning is one way to handle closed-loop planning, namely by checking whether actions within the computed plan have been executed correctly and whether future planned actions can still be executed or need to be recomputed. This is necessary because plans are generated over abstractions and models which will always be lossy with respect to, or not capture all sorts of uncertainty in the real world.

Christian Fritz’s entire thesis is about efficient execution monitoring and ‘conservative replanning’ when given a model, and much more. Yet at its core, it again builds upon the key idea of goal regression.

References

- Learning and Executing Generalized Robot PlansArtif. Intell., 1972

- Satisficing and Optimal Generalised Planning via Goal RegressionIn AAAI, 2026

- Acting, Planning, and Learning2025

- Artificial Intelligence - A Modern Approach: the Intelligent Agent Book1995

- A Concise Introduction to Models and Methods for Automated Planning2013

- Report on a general problem-solving programIn Proceedings of the 1st International Conference on Information Processing, 1959

- Monitoring the Generation and Execution of Optimal PlansUniversity of Toronto, Canada, 2009

- Achieving several goals simultaneouslyMachine Intelligence, 1977

- Lifted Successor Generation Using Query Optimization TechniquesIn ICAPS, 2020

Enjoy Reading This Article?

Here are some more articles you might like to read next: